Multi-threaded Performance Pitfalls (presentation)

Contents

- 2. License: Copyright © 2008 Ciaran McHale. Permission is hereby granted, free …

- 3. Purpose of this presentation: Some issues in multi-threading are counter-intuitive. Ignorance …

- 4. Part 1: A case study

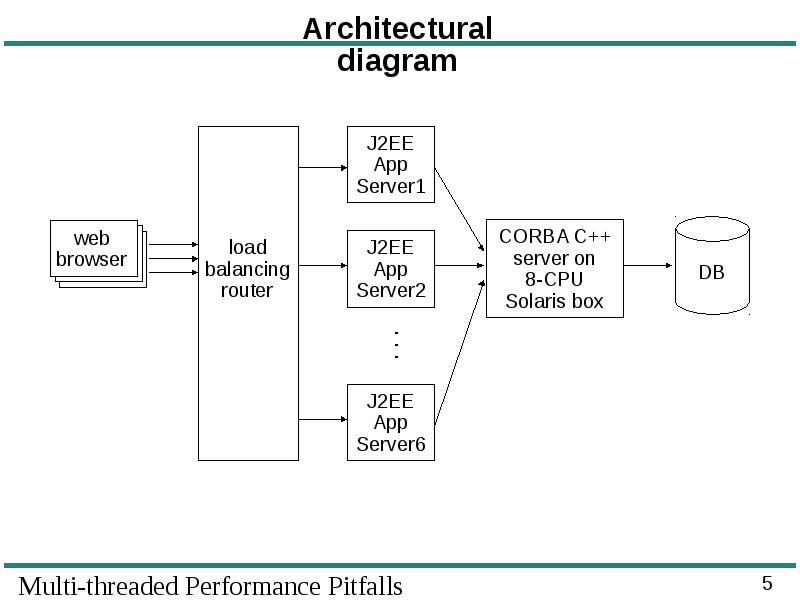

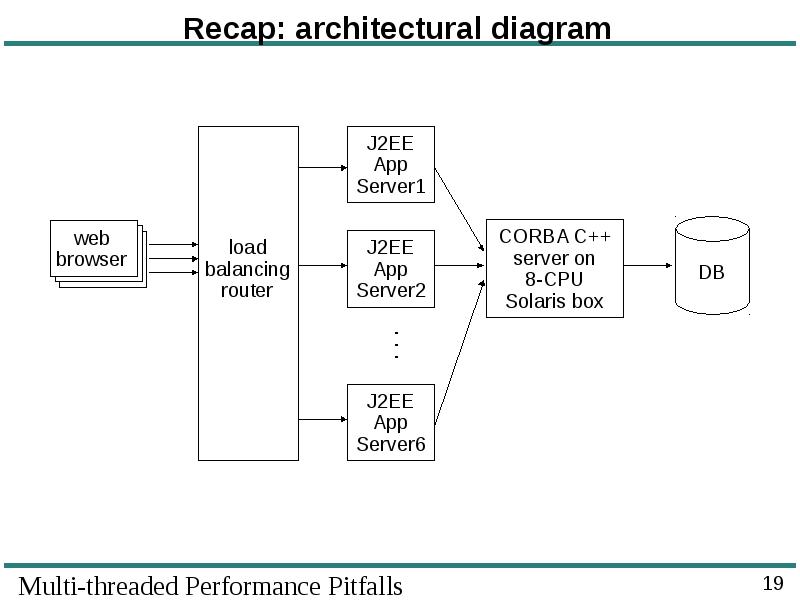

- 5. Architectural diagram

- 6. Architectural notes: The customer felt J2EE was slower than CORBA/C++. So, …

- 7. Strange problems were observed: Throughput of the CORBA server decreased as …

- 8. Part 2: Analysis of the problems

- 9. What went wrong? Investigation showed that scalability problems were caused by …
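Scalability problems like these can be made visible by measuring throughput while varying the number of threads. The C++ sketch below is not taken from the presentation. `run_contended` and `print_scaling` are hypothetical names. The single global `std::mutex` is an assumed stand-in for whatever shared resource is under test.

```cpp
#include <cassert>
#include <chrono>
#include <cstdint>
#include <cstdio>
#include <initializer_list>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical harness: num_threads threads each perform ops_per_thread
// increments of one counter guarded by a single shared std::mutex.
// Returns the total number of operations performed and stores the elapsed
// wall-clock time (in seconds) in seconds_out.
std::uint64_t run_contended(unsigned num_threads, std::uint64_t ops_per_thread,
                            double& seconds_out) {
    std::mutex m;
    std::uint64_t counter = 0;
    std::vector<std::thread> workers;
    const auto start = std::chrono::steady_clock::now();
    for (unsigned t = 0; t < num_threads; ++t) {
        workers.emplace_back([&] {
            for (std::uint64_t i = 0; i < ops_per_thread; ++i) {
                std::lock_guard<std::mutex> guard(m);
                ++counter;
            }
        });
    }
    for (auto& w : workers) w.join();
    const auto end = std::chrono::steady_clock::now();
    seconds_out = std::chrono::duration<double>(end - start).count();
    // Sanity check: the mutex must have prevented lost updates.
    assert(counter == static_cast<std::uint64_t>(num_threads) * ops_per_thread);
    return counter;
}

// Prints operations per second for 1, 2, 4 and 8 threads. Throughput that
// falls (rather than levelling off) as threads are added suggests contention
// on the shared resource.
void print_scaling(std::uint64_t ops_per_thread) {
    for (unsigned n : {1u, 2u, 4u, 8u}) {
        double secs = 0.0;
        const std::uint64_t ops = run_contended(n, ops_per_thread, secs);
        const double rate = secs > 0.0 ? static_cast<double>(ops) / secs : 0.0;
        std::printf("%u threads: %.0f ops/sec\n", n, rate);
    }
}
```

For example, calling `print_scaling(1000000)` from `main` prints one line per thread count. The absolute numbers depend on the hardware, the operating system and the mutex implementation.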

- 10. Cache consistency: RAM access is much slower than the speed of the CPU …

- 11. Cache consistency (cont.): Overhead of cache consistency protocols worsens as: … Overhead …
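One way to observe cache-consistency overhead is to compare two cases. In the first, many threads write to one shared variable. In the second, each thread writes to its own variable, padded so that no two variables share a cache line. The C++17 sketch below makes several assumptions. The 64-byte cache-line size is typical of x86-64 but not universal. `count_shared`, `count_per_thread` and `PaddedCounter` are hypothetical names. Both functions return the same total, and only the memory traffic between CPUs differs.

```cpp
#include <atomic>
#include <cassert>
#include <cstdint>
#include <thread>
#include <vector>

// Variant A: all threads update one shared atomic counter. Each increment
// needs exclusive ownership of the same cache line, so the cache consistency
// protocol keeps moving that line between CPUs.
std::uint64_t count_shared(unsigned num_threads, std::uint64_t iters) {
    std::atomic<std::uint64_t> total{0};
    std::vector<std::thread> workers;
    for (unsigned t = 0; t < num_threads; ++t)
        workers.emplace_back([&total, iters] {
            for (std::uint64_t i = 0; i < iters; ++i)
                total.fetch_add(1, std::memory_order_relaxed);
        });
    for (auto& w : workers) w.join();
    return total.load();
}

// Variant B: each thread increments its own counter. Each counter is aligned
// to an assumed 64-byte cache line, so neighbouring counters do not share a
// line ("false sharing"). The per-thread values are summed once at the end.
struct alignas(64) PaddedCounter {
    std::uint64_t value = 0;
};

std::uint64_t count_per_thread(unsigned num_threads, std::uint64_t iters) {
    std::vector<PaddedCounter> slots(num_threads);  // C++17: honours alignas
    std::vector<std::thread> workers;
    for (unsigned t = 0; t < num_threads; ++t)
        workers.emplace_back([&slots, t, iters] {
            for (std::uint64_t i = 0; i < iters; ++i)
                ++slots[t].value;  // only thread t touches slots[t]
        });
    for (auto& w : workers) w.join();
    std::uint64_t sum = 0;
    for (const auto& s : slots) sum += s.value;
    assert(sum == static_cast<std::uint64_t>(num_threads) * iters);
    return sum;
}
```

To compare the two variants, time each call with `std::chrono::steady_clock` at several thread counts. Be aware that an optimizing compiler may collapse the loop in variant B into a single addition, which exaggerates the difference. The shared variant's cost is the part that grows with contention.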

- 12. Unfair mutex wakeup semantics: A mutex does not guarantee First In First Out (FIFO) …

- 13. Unfair mutex wakeup semantics (cont.): Why does a mutex not provide …

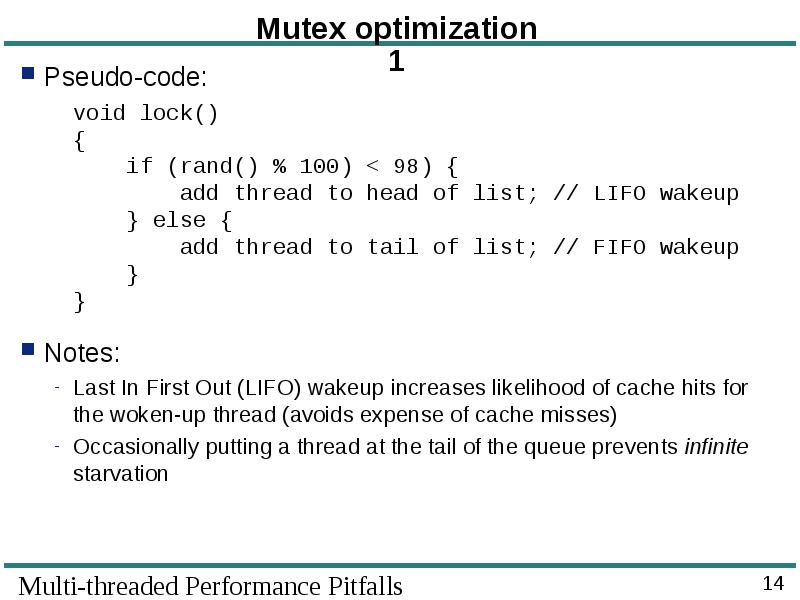
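For contrast, a lock that does grant access in arrival order can be sketched with a ticket scheme. The sketch below is a hypothetical `TicketLock` built on `std::mutex` and `std::condition_variable`. It is not from the presentation and not a standard library type. It exposes `lock()`/`unlock()` so that it works with `std::lock_guard`.

```cpp
#include <cassert>
#include <condition_variable>
#include <cstdint>
#include <mutex>

// Hypothetical FIFO ("ticket") lock. Each caller of lock() takes the next
// ticket number and sleeps until now_serving_ reaches it, so threads acquire
// the lock strictly in the order in which they called lock().
class TicketLock {
public:
    void lock() {
        std::unique_lock<std::mutex> guard(m_);
        const std::uint64_t my_ticket = next_ticket_++;
        cv_.wait(guard, [&] { return now_serving_ == my_ticket; });
    }

    void unlock() {
        {
            std::lock_guard<std::mutex> guard(m_);
            assert(now_serving_ < next_ticket_ && "unlock() without lock()");
            ++now_serving_;
        }
        // Wake every waiter; only the holder of the next ticket proceeds,
        // and the others go back to sleep.
        cv_.notify_all();
    }

private:
    std::mutex m_;
    std::condition_variable cv_;
    std::uint64_t next_ticket_ = 0;
    std::uint64_t now_serving_ = 0;
};
```

This design choice shows what fairness costs. Every `unlock()` wakes all sleeping waiters. In addition, no other thread can take the lock until the next ticket holder has been scheduled. That can force a context switch on every hand-off even when the releasing thread is ready to continue. Costs like these are one general reason why mutex implementations commonly avoid FIFO guarantees.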

- 14. Mutex optimization 1: Pseudo-code: `void lock() { if (rand() % …`

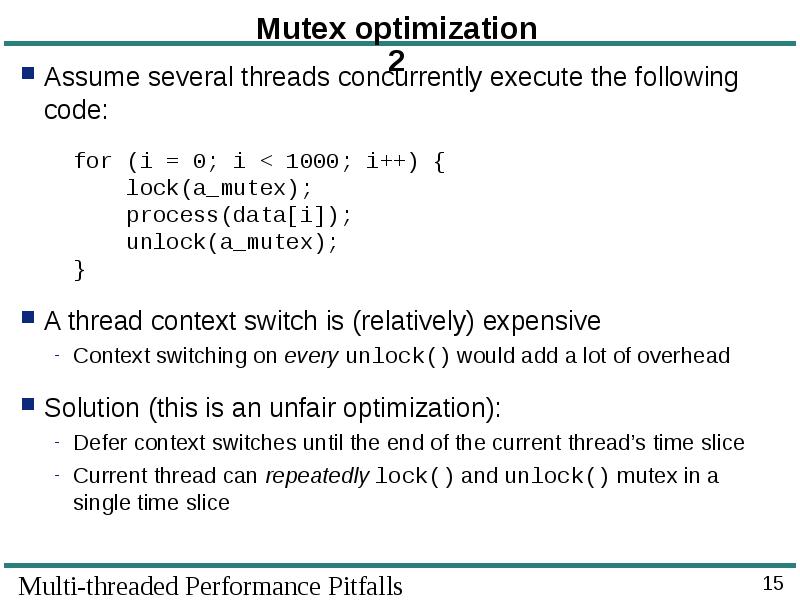

- 15. Mutex optimization 2: Assume several threads concurrently execute the following code: …
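The slide's code is not included in this preview. As a hypothetical stand-in, assume each thread runs a tight loop that locks and unlocks one shared mutex. The C++ sketch below records which thread acquires the lock each time. It then reports the longest run of consecutive acquisitions by the same thread. `record_acquisitions` and `longest_run` are illustrative names, and the loop body is an assumption rather than the presentation's code.

```cpp
#include <cassert>
#include <cstddef>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical experiment: num_threads threads each lock and unlock one
// shared std::mutex iters times, appending their index to `order` while
// holding the lock. Returns the resulting acquisition order.
std::vector<unsigned> record_acquisitions(unsigned num_threads, std::size_t iters) {
    std::mutex m;
    std::vector<unsigned> order;
    order.reserve(num_threads * iters);
    std::vector<std::thread> workers;
    for (unsigned t = 0; t < num_threads; ++t)
        workers.emplace_back([&m, &order, t, iters] {
            for (std::size_t i = 0; i < iters; ++i) {
                std::lock_guard<std::mutex> guard(m);
                order.push_back(t);  // safe: protected by m
            }
        });
    for (auto& w : workers) w.join();
    assert(order.size() == num_threads * iters);
    return order;
}

// Length of the longest run of consecutive acquisitions by the same thread.
// A long run means one thread kept re-acquiring the mutex. This can happen
// because other threads had not started yet, or because the thread released
// and re-locked the mutex before a woken waiter got to run.
std::size_t longest_run(const std::vector<unsigned>& order) {
    std::size_t best = 0;
    std::size_t current = 0;
    for (std::size_t i = 0; i < order.size(); ++i) {
        current = (i > 0 && order[i] == order[i - 1]) ? current + 1 : 1;
        if (current > best) best = current;
    }
    return best;
}
```

For example, printing `longest_run(record_acquisitions(4, 100000))` several times shows how unevenly the mutex is handed out on a given platform. The result varies from run to run, so no particular value should be expected.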

- 16. Part 3: Improving throughput

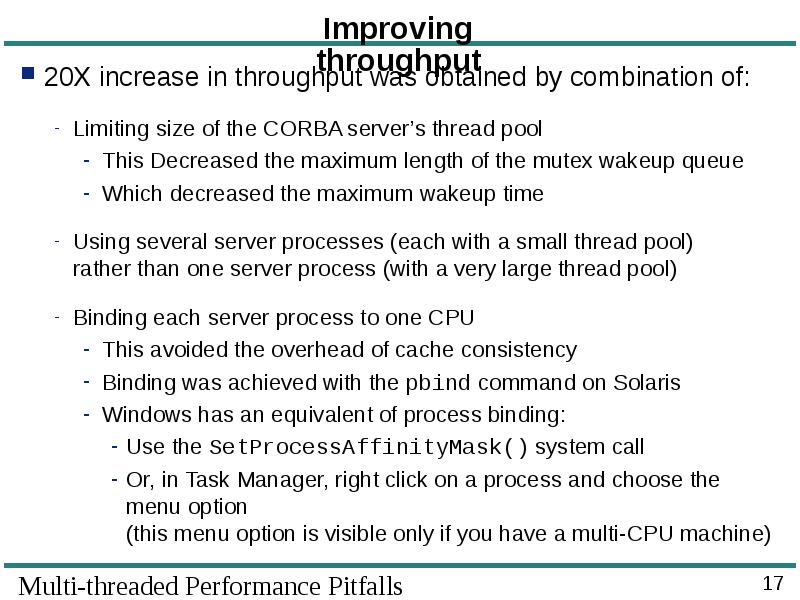

- 17. Improving throughput: A 20X increase in throughput was obtained by a combination of: …

- 18. Part 4: Finishing up

- 19. Recap: architectural diagram

- 20. The case study is not an isolated incident: The project’s high-level …

- 21. Summary: important things to remember. Recognize danger signs: Performance drops as …
